ESS 3500 R Handbook, Lesson 12

Emily Schultz

2023-02-15

Lesson 12: Assumptions and Transformations

In this lesson, we will cover the assumptions of t-tests and other similar models, how to test those assumptions in R, and what to do when the assumptions are violated.

12.1 Conceptual background

When ever we use models, whether they are statistical models, mathematical models, or conceptual models, we make assumptions about the system we are trying to represent with those models. For statistical models, the assumptions we make are generally related to how our data were collected and the properties of the data (e.g., what type of variable: categorical, discrete, or continuous, the distribution of the variable, etc.). In this lesson, we will learn about the assumptions for t-test (and related models that we will learn later in the class), how to test whether our assumptions are met, and what to do if they are not. We will focus primarily on the assumption related to the properties of the data because the assumptions about data collection are related to topics we already covered when we discussed experimental design.

Assumptions about data collection

T-test and similar models have two main assumptions related to data collection:

- Samples are random

- Samples are independent

These assumptions are related to some of the concepts that we covered when we discussed experimental design. If you follow guidance for randomly (or at least haphazardly) selecting samples and correctly identify the number of independent samples based on your experimental design, you should be good to go here. Sometimes, though, we intentionally incorporate non-independent samples into our experimental design, such as when we use random block designs or paired designs. In this case, we have to adjust for that design in our statistical analysis. That is exactly what we do when we run a paired t-test for a paired experimental design. We account for the non-independence by running the test on the difference in the non-independent pairs, rather than the raw data. When we do this, it also reduces the degrees of freedom in the analysis (recall that the degrees of freedom for a paired t-test is based on the number of pairs rather than the total number of data points). This adjusts for the fact that our samples are not independent, and we do not have as much independent information in our data set.

Assumptions about data properties

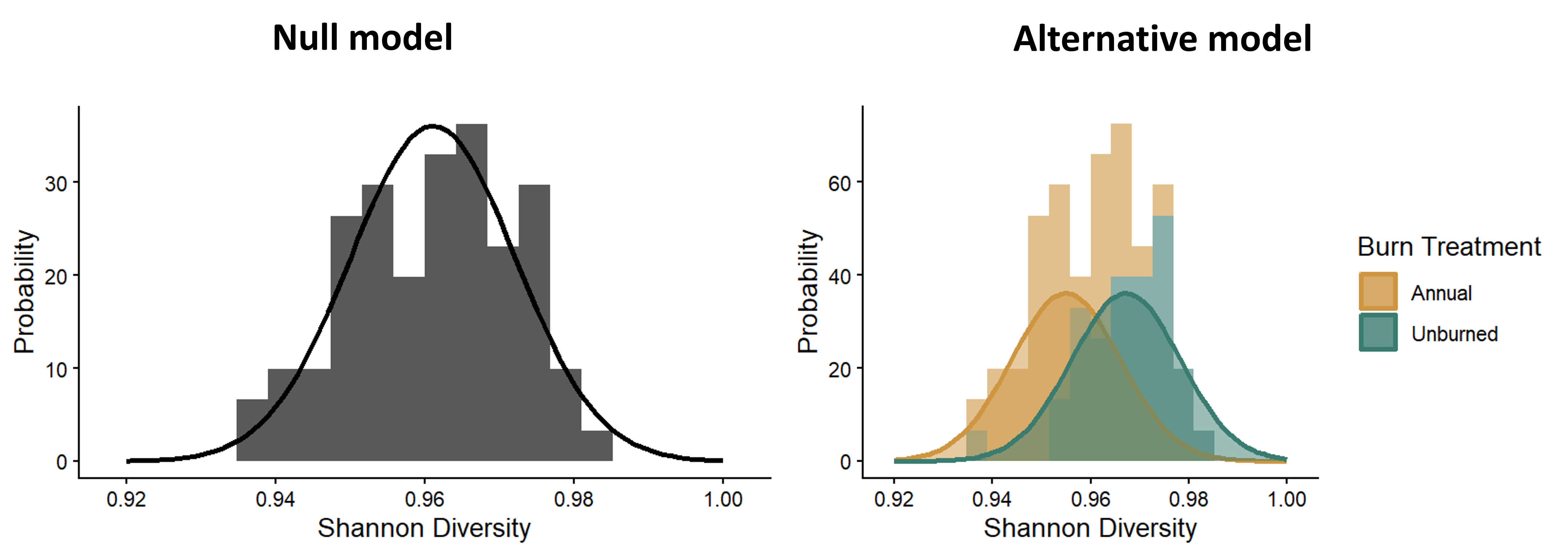

There are two additional assumptions of t-tests that are related to the properties of the data themselves. To understand these assumptions, let’s look back at the distribution graphs that represent out null and alternative hypotheses for a two-sample t-test, shown below. In these models, we specifically fit normal distributions to the data and test whether the data are best represented by a single normal distribution or two distributions with different means for each treatment group.

Distributions representing the null and alternative models for a t-test.

The the math behind the t-distribution we use to determine the p-value (the probability of a t-statistic greater than or equal to ours assuming the null hypothesis is true) is based on the expectation that a normal distribution is a good fit for our sample distribution. Furthermore, in a standard t-test, we allow the means to vary in the alternative model, but the variances are the same between the two distributions, which we can see in the figure. This is reflected in the calculation of the t-statistic itself. The variation term in the denominator of the t-statistic is the pooled variance across both groups. You don’t calculate separate variance terms for each group. These mathematical calculations of the t-statistic and t-distribution lead to our two assumptions related to the properties of the data:

- The data (specifically the residuals of the data, which we will discuss in the next section) are normally-distributed.

- The variances are equal between the two sample groups.

If you try to run a test if your data do not match these assumptions, the conclusion from the test might not be valid. We will dig into each of these assumptions in the sections below, focusing on how we test these assumptions and what we can do when the assumptions are not met.

Normally-distributed residuals

What are residuals?

The residuals are the leftover variation in our dependent variable, after we have accounted for the variation explained by our independent variable. What this means in the context of a two-sample t-test is that we calculate the average value for each of our treatment groups, and then calculate the difference between the mean of the group and each individual data point in each group. The difference between the means of the groups is the effect of the independent variable, and the different between the means and each data point is the leftover variation, or the residuals.

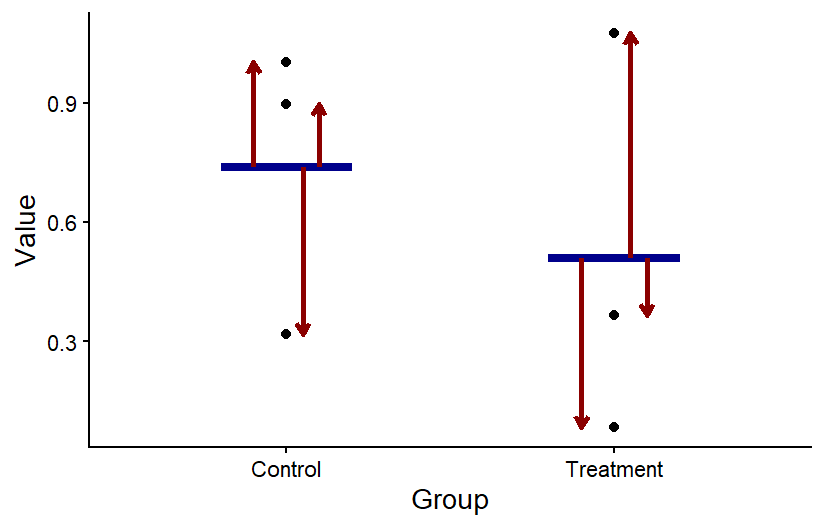

The graph below shows a visual representation of residuals. The points in the graph show the individual data points, the horizontal blue bars show the mean for each group, and the arrow showing the difference between the mean and each point represent the values of the residuals. When we run a statistical test, we can save the residuals from the output of the test to determine if they are normally-distributed.

The residuals for two sample groups. For each group, the value of the residuals is the difference between each data point and the mean of the group.

Visualizing residuals

One of the best ways to determine whether our residuals are normally distributed is to make graphs of the residuals saved from a test and do a visual check of the distribution. We will look at two types of graphs to assess the distribution.

The first type of graph you can make is one that you are already familiar with: a histogram. If you histogram your residuals, you can visual determine if the histogram looks like the bell-curve we would expect for a normal-distribution.

The second type of graph is called a q-q plot, or quantile-quantile plot. Quantiles are the boundary points when a set of data points is put in order and then divided into equal-sized subsets. A q-q plot graphs the quantiles that would be expected for a data set (such as the residuals from a statistical test) if it followed a normal distribution (or another type of distribution that you can specify) in comparison to the observed quantiles from the actual data set. If the values match, that indicates the actual data set does follow a normal distribution. Graphically speaking, this means that if you graph the theoretical residuals on the x-axis and the obersved residuals on the y-axis, the points will fall along the 1:1 line on the graph. If the points do not fall along the line, the residuals are not normally distributed.

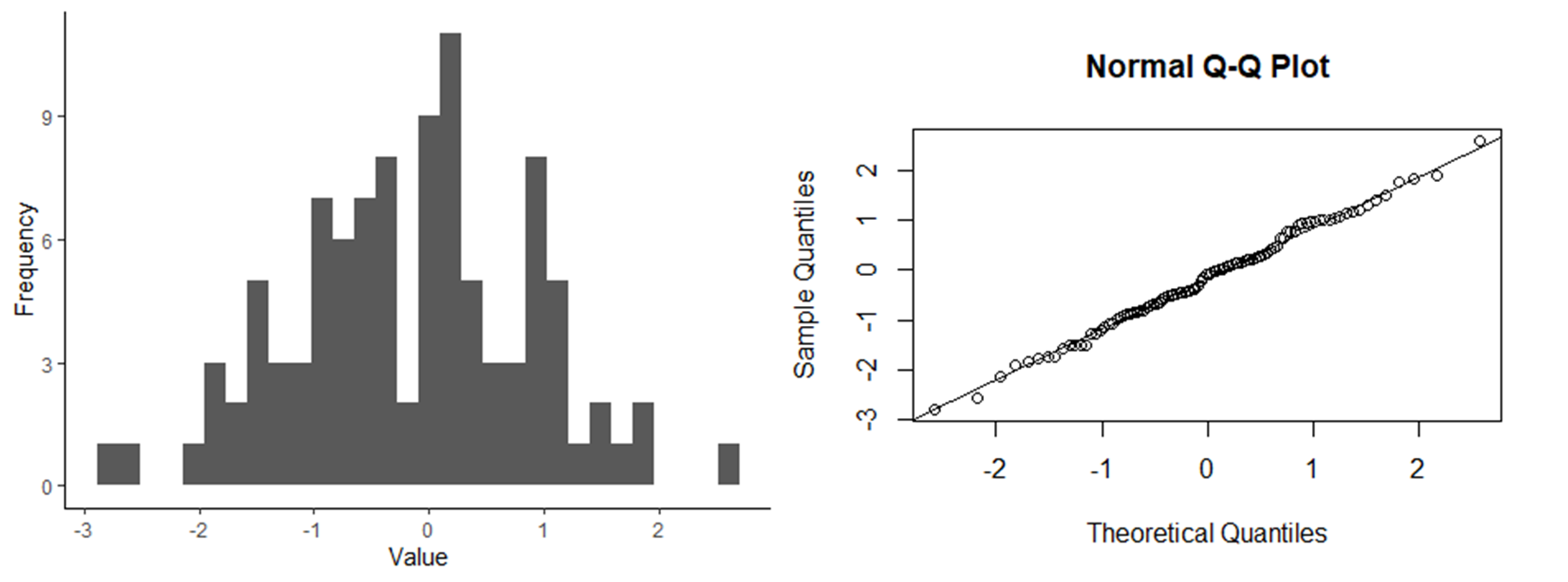

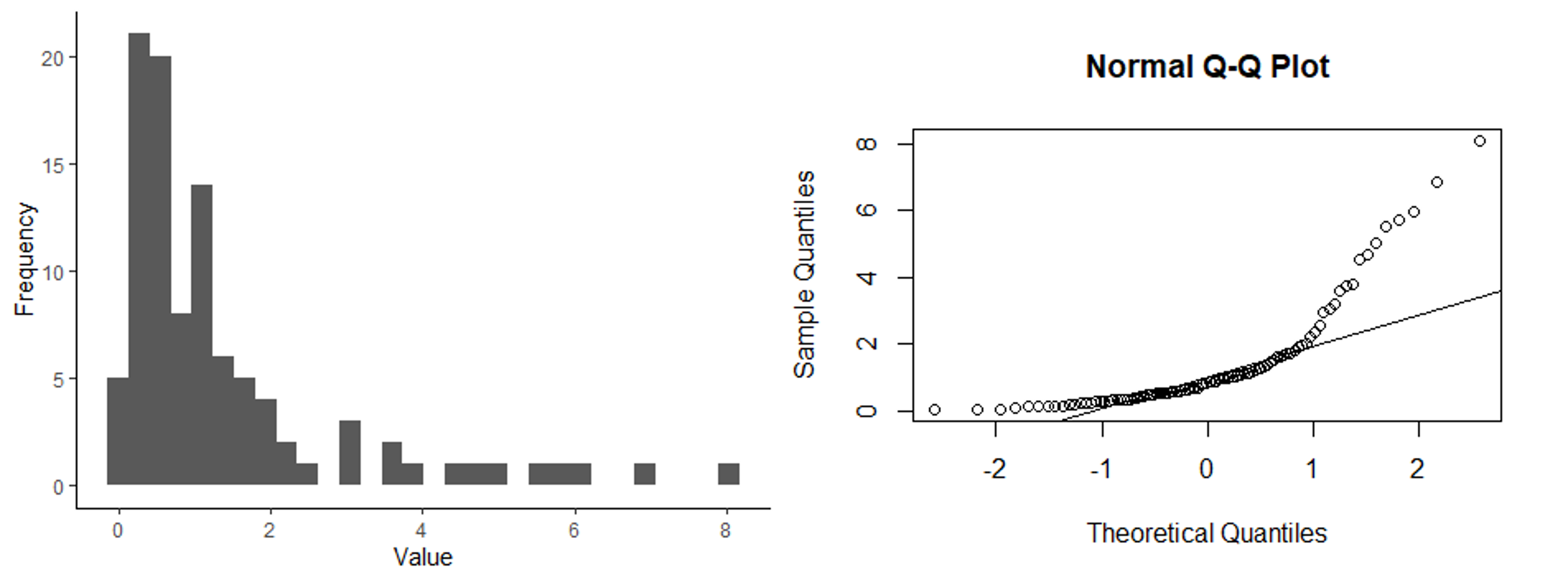

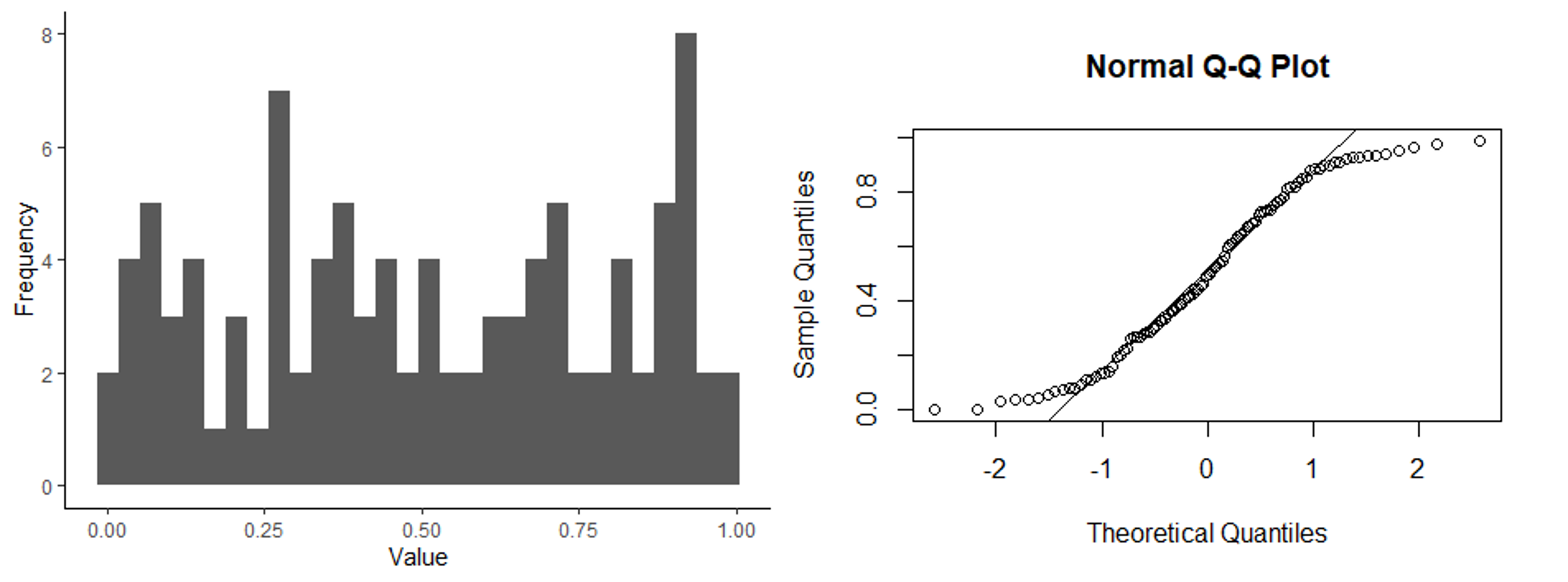

Below are three sets of graphs that show a histogram of a data set along with a q-q plot for the same data set, so you can see what a q-q plot would look like for differently-shaped distributions. In the first set of graphs, the residuals are close to a normal distribution, and the points in the q-q plot fall close to the 1:1 line that is shown in the graph. In the second set of graphs, the distribution is skewed. In the q-q plots, the points fall close to the line in the middle, but at either end, they fall above the line. When the points fall above the line at both ends or below the line at both ends, that is what indicates a skewed distribution. Finally, in the last set of graphs, the ends (tails) of the distribution are thicker (have higher frequencies) than would be expected for a normal distribution. This results in a q-q plot with points that are above the line at the low end and below the line at the high end. Of the distributions shown, it is the skewed distribution that is the biggest problem. If the residuals are not normally-distributed, but the shape of the distribution is symmetrical, such as in the third set of graphs, it is usually not a big problem.

Histogram and q-q plot of normally-distributed data.

Histogram and q-q plot of skewed data.

Histogram and q-q plot of a distribution with thick tails.

Formal test for normally-distributed residuals.

In addition to visually assessing the distribution of your residuals, there are statistical tests that can be used to test whether residuals follow a normal distribution. In this class, the test we will learn for this is called a Shapiro-Wilks test. Like with most of the other tests we work with in this class, when we run this test, we start with our null and alternative hypotheses for the test. In this test, we are not making comparisons between variable, but instead we are comparing the distribution of a data set to a normal distribution. Therefore our hypotheses would be:

- Null hypothesis: the data are normally-distributed.

- Alternative hypothesis: the data are not normally-distributed.

We can then run the test, using the residuals saved after running a statistical test, which will give us a p-value. If the p < 0.05, we would reject the null hypothesis and conclude that the residuals are not normally-distributed.

While it might seem like a formal statistical test like this is the best approach for ensuring that our residuals are normally-distributed, I urge you to use and interpret this test with caution. Like other statistical tests, it is sensitive to the sample size of the data set. If you have a large data set, the test will have a lot of power to detect even small deviations from a normal distribution, even if the deviations are small enough that they aren’t a problem for running the test. In other words, the test is overly-sensitive. On the flip side, if you have a very small sample size, the test will not have a lot of power to detect deviations from a normal distribution, and you might fail to reject the null hypothesis, even if the residuals of your data are a problem.

In this class, if you want to use visual assessments and not run the Shapiro-Wilks test to check the assumption of normally-distributed residuals, that is fine (that is usually my approach). If you do run a Shapiro-Wilks test, however, you should not rely on that approach on its own. You should also use a histogram or q-q plot to do a visual check.

Equal variances

Visualizing variances

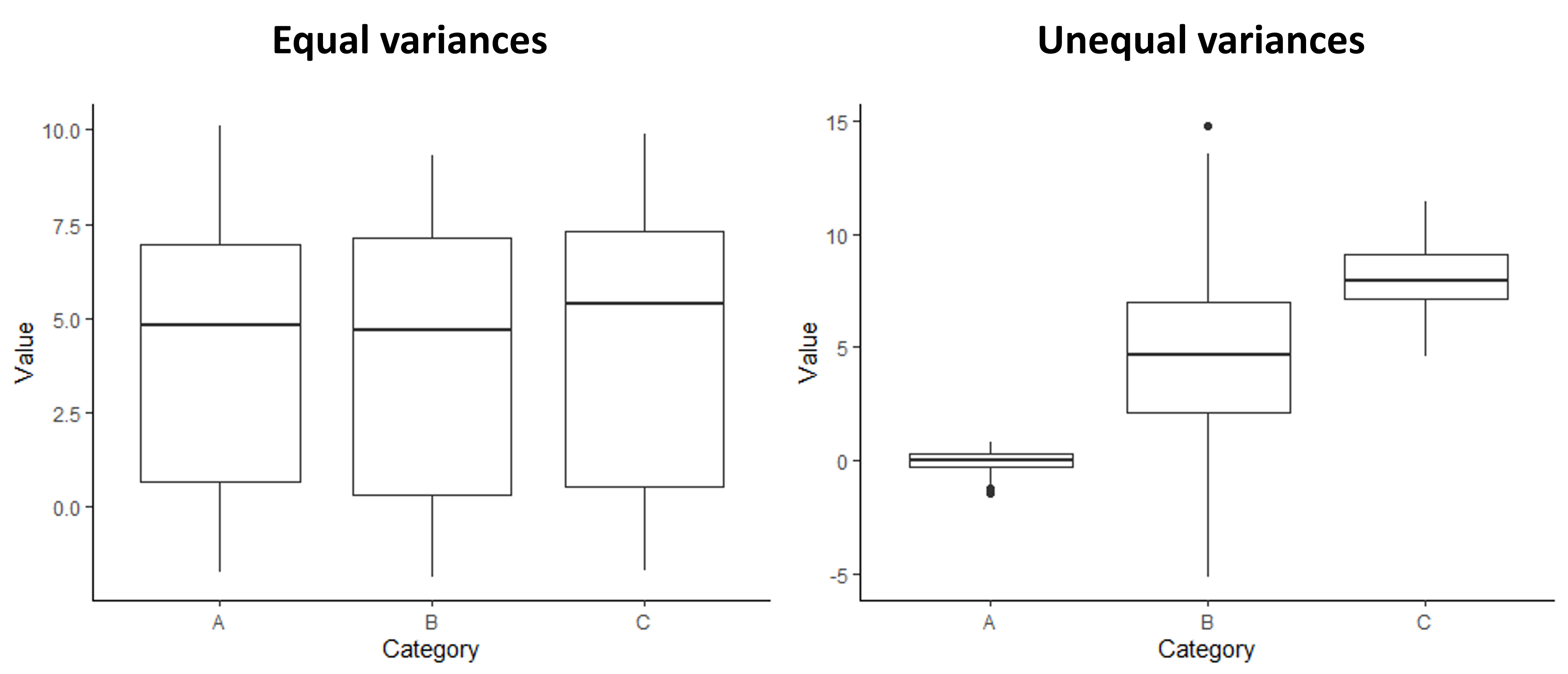

Like with the assumption of normally-distributed variables, one of the best ways to check for equal variances is to graph the data and do a visual check. Good news: you already know a useful graph for this check! Boxplots are great for comparing the variation between sample groups. If you make a simple boxplot, you can compare the height of the boxes and whiskers between your groups to make sure they are roughly equal. The graphs below show an example of what a boxplot would look like for groups with equal variances compared to groups with unequal variances.

Examples of boxplots showing groups with equal variances and unequal variances

Formal tests for equal variances

There is also a formal statistical test for comparing the variances across groups. It is called the Levene’s test. The null and alternative hypotheses for a Levene’s test are:

- Null hypothesis: The variances are equal across the groups.

- Alternative hypothesis: The variances are not equal across the groups.

For this test, we use our original data set (rather than the residuals saved after running a statistical test) because we are specifically comparing between groups, rather than looking at the total leftover variation after accounting for differences between the groups. If the test gives us a p-value less than 0.05, we would reject the null hypothesis and conclude that the variances are not equal.

This test comes with the same caution as the Shapiro-Wilks test. It can be overly-sensitive if you have a large sample size and not sensitive enough if you have a small sample size. Therefore, I am again fine with you only using a visual check (with a boxplot), but if you do run the test, you should be sure to do a visual check as well.

Addressing violations of assumptions

Tests like t-test, as well as the other tests we will cover in the rest of this class, are fairly robust to violations of the assumptions. If there are small deviations from normality or small differences in the variances of the groups, it is usually not a problem. However, if our data have residuals that deviate strongly from a normal distribution or if the variances are very different between groups are not equal, the results of a t-test might not be valid, so it is best to try to address the problem in some way.

If you do decide the violations of the assumptions are strong enough, there are a few strategies we can try to address the problem:

- Data transformations

- Remove outliers

- Use a different test

We will go through each of these options below.

Data transformations

Sometimes if our residuals are not normally-distributed or if our variances are unequal, we can fix the problem by transforming our data. In other words, we can apply a function to the data that will change the shape of the distribution so that it is normal or equalize the variance. If I think I have a problem with my assumptions, data transformations are usually the first approach I try to deal with the problem because they are easy and you do lot lose any power in your test with this approach.

Probably the most common type of transformation that we use in ecology is a log transform. This can be effective if our data are skewed (have a log-normal distribution), which is common for many types of ecological data. This can be effective because of the relationship between normal and log-normal distributions. By definition, if you have a data that follow a log-normal distribution, the logarithm of those data are normally distributed. To run a log-transform, you simply take the natural logarithm of your dependent variable, and then you run the statistical test on the log-transformed data instead of the raw data. You should then save the residuals of the new test, and double-check that they are normally distributed. If they are, the transformation worked, and you can legitimately interpret the results of the test using the log-transformed data. If the new residuals are still not normally-distributed, then you should try a different approach.

For correcting the problem of unequal variance, you can try a square-root transform. It is a similar idea to a log-transform, but you instead that the square root of your data. You can then just the variances again. If they are equal for the square-root-transformed data, you can then proceed with a t-test using the square root transformed data.

Although a log transform usually works best for skewed residuals and a square-root transform usually works best for unequal variances, if the first transform you try doesn’t work, I would recommend trying the other transform. Sometimes you get surprised!

One downside of log transforms and square-root transforms is that you cannot take the log or square root of negative numbers, and you cannot take the log of zeros. Therefore, these approaches do not work if you have those values. Sometimes if you have a few zeroes (and no negative values) and you want to try a log transform, you can add a small value (such as 0.01) to those zeros and then do the log transform. This can sometimes do weird things to the distribution, though, depending on how the log of the small values compares to the rest of the values in your data set. I would also not recommend this approach if you have a lot of zeros in your data set.

If a data transformation does not work or cannot be done with the type of data you are working with, you can move on to a different approach.

Remove outliers

Another strategy you can use is to remove outliers - data points that fall far outside the range of the rest of your data set. This can address violations of your assumptions if the residuals are not normally-distributed due to a few unusual data points or if the unequal variances are due to outlier points in one of the groups. To use this approach, you would remove the data points from the data set and run the test without those values.

While this approach can solve some types of problems, think hard before trying this approach. Removing data is not something that should be done lightly. Some sources might recommend removing outliers if they are more than two standards deviations away from the mean. I, however, do not like a blanket approach like that because outliers can by important data. I usually only remove outliers from my data set if I have additional information (aside from simply the fact that the point is an outlier) that tells me something is unusual about the data point. For example, when I was measuring the movement of ants on different surfaces for my senior thesis project, I made a note that I accidentally got glue on the the leg of one of my ants. When the movement of that ant turned out to be very different from the other individuals, I felt comfortable removing that data point because I knew something had happened during the experiment that would reasonably cause it to be unusual. (This is one reason it is important to take good notes when you collect data!)

If you do decide to use this approach, you should be clear about your reasoning for removing the points you choose to remove. You should also not remove the points from your original data set (you want to preserve all of the data you collected, even if you don’t use it in a particular analysis). Use R to create a new data frame with the points removed, but don’t change the spreadsheet where the data are stored.

Run a different test

Not all statistical tests have the same assumptions. The assumptions of a test depend on the math underlying that test. If something like a data transformation does not work to address violations of assumptions, and you think the problem is big enough that you are not comfortable running a standard t-test, you can use a different type of test for your analysis. We will cover two such tests below, one for residuals that are not normally-distributed and one for unequal variances. Note, though, that these are not the only alternative tests out there. For residuals with non-normal distributions, in particular, there are tests that assume other types of distributions. In fact, many of the types of tests we will learn in this class can, with tweaks, be extended to use different distributions that might be better matches for the types of data we are working with.

Mann-Whitney U Test

The Mann-Whitney U test is similar to a t-test, but it can be used when the residuals are not normally-distributed (and often when variances are unequal because sometimes skewed distributions can lead to unequal variances). In fact, the Mann-Whitney U test is a type of non-parametric test, meaning it does not assume a particular distribution.

The way the test works is that instead of using the specific values from your data set, it ranks all of the values from your full data set (both groups lumped together) and then it compares the mean rank of the data points between your two groups. The way I think of it is that the Mann-Whitney U test is to the t-test the way the median is to the mean. Just like the median is a better measure of central tendency when you have skewed data, the Mann-Whitney U test is a better statistical test if you have skewed data.

For a Mann-Whitney U test, the null and alternative hypotheses are then:

- Null: there is no difference in the mean rank of data points between the two groups.

- Alternative: there is a difference in the mean rank of data points between the two groups.

From there, it is similar to running a t-test. You will get a p-value that you can use to draw your conclusion. As always, if the p-values is < 0.05, you reject the null hypothesis.

A couple words of caution about Mann-Whitney U tests:

- While these tests can work better than a t-test if your residuals are not normally-distributed, extreme skew in your residuals is still a problem for a Mann-Whitney U test.

- Because this test is based entirely on the rank of the data points, and not the values themselves, you lose a lot of information, and often power, from your data when you use this type of test. Therefore, while it may seem easier to just run this type of test as the default and not bother with the annoying assumptions testing and trying out transformations, that is not a good approach. Only choose this option if you have to. If your original data do not match the assumptions, you should first try a transformation before resorting to a Mann-Whitney U test.

Welch’s test

A Welch’s test can be used in place of a t-test when the residuals are normally-distributed but the variances are not equal. It is almost identical to a t-test, but when the variance is incorporated into the t-statistic, instead of calculating a pooled variance, the variance is calculated separately for each group. Otherwise, the hypotheses, implementation and interpretation are the same as for a regular t-test.

Unlike with a Mann-Whitney U test, you do not lose information when you run a Welch’s test. The degrees of freedom will take a small hit on account of calculating separate variances for each group instead of a single variance. However, the loss of power is minimal, so it is less problematic to use this approach as your default (in fact, the default in R when you run the t-test function is to run a Welch’s test). However, if I notice that the variances are equal when I visualize my data, I still run a regular t-test.

Final thoughts

Deciding how to handle violations of assumptions can be challenging because, to a large degree, the process requires judgement calls about whether the problem is big enough that it needs to be addressed and what the best approach is to handle the problem. To a large degree, good judgement about assumptions comes with experience. However, I do have some tips to help you with the decision making process. Here are some things you should be thinking about as you work through the process:

- What is the sample size? The larger the sample size, the more robust the test are to violations of the assumptions.

- For the residuals: What is the specific shape of the residuals. If they are skewed, that is a bigger problem. If they just have fat or thin tails, but are still symmetrical, it is probably fine to run the test.

- For the variances: Are the sample sizes of the group equal. Unequal variances are a bigger problem is the sample sizes of the groups are very different from each other.

- How easy is it to improve the problem, and what are the consequences of the fix? Data transformations are an easy fix, and you don’t lose power, so if the distribution is a little off and/or the variances are different, it can be worth a try to do a transformation to see it it improves things. A Welch’s test doesn’t result in a major loss of power either, so I wouldn’t hesitate to use that if I was unsure of the variances. A Mann-Whitney U test, on the other hand, involves a loss of information, so I would only use this approach if I was pretty concerned.

Finally, there can be circumstances where nothing seems like a great option. There are other options out there that you can explore, but in this class, it would be acceptable to run the test but interpret the results with some skepticism and be clear about the problems. In general, when you are making judgement calls about the data analysis process (remember that there are usually subjective decisions that go into the process, particularly with more complex analyses), you should clearly document your process and your reasoning. Other researchers might not always agree with your approach, but if you are honest and thorough with your explanations, they can use their own judgement in interpreting your results.

12.2 Testing assumptions in R

For this lesson, we will work with a new data set that contains data from an experiment that was done to test for the effect of invasive grass removal on the plant community. As a first pass, the researchers simply wanted to know if removing the grasses from the plot at the start of the growing season was effective for reducing the invasive grass cover in the plot at the end of the growing season. You will work with two variables from this data set. Your independent variable is weed removal (the name of this variable is “Weed” in the data set), and your dependent variable is grass cover (the name of this variable is “Grass_cover” in the data set). Because we have a categorical independent variable with two values and a continuous dependent variable, a t-test is an appropriate test for these data, if the assumptions are met.

Download the InvasiveGrass.csv file from Canvas. Set your working directory in R to the folder that contains the data file.

Then load your data file into R using the usual ‘read.csv’ function. I am calling the data object “grass” for this example.

grass <- read.csv("InvasiveGrass.csv")We will also use the ggplot2 package for this lesson, so load that package now as well:

library(ggplot2)Testing for normality

The assumption normality for t-tests is that the residuals (the leftover variation that is not explained by our model) are normally distributed. Therefore, in order to test this assumption, we need to first run our model and save the residuals to test.

When we run the t-test this time, we will use a different function

(the lm function) than we practiced in the previous lesson.

We use the ‘lm’ function instead of the ‘t.test’ function because the

‘t.test’ function doesn’t save the residuals from the test. However, the

models built by the two functions are exactly the same, so the residuals

are the same regardless of the approach we use to test the models. We

can choose to use either function after checking our assumptions. We

will store the test output in an object called “grass_ttest”.

Conveniently, the required arguments for running a t-test using the

lm function are the same, and can use exactly the same

format, as the t.test function we learned before.

grass_ttest <- lm(Grass_cover ~ Weed, data = grass)We will now save the residuals from the model using the

resid function. Then we will store the residuals in a data

frame, so we can graph them using ggplot. We will store the

residuals in a data frame called “resids” and the variable name within

the data frame will be “Residuals”.

# Save residuals

grass_resid <- resid(grass_ttest)

# Store residuals in a data frame

resids <- data.frame(Residuals = grass_resid)Now that we have saved our residuals, we can move on to testing whether they are normally distributed.

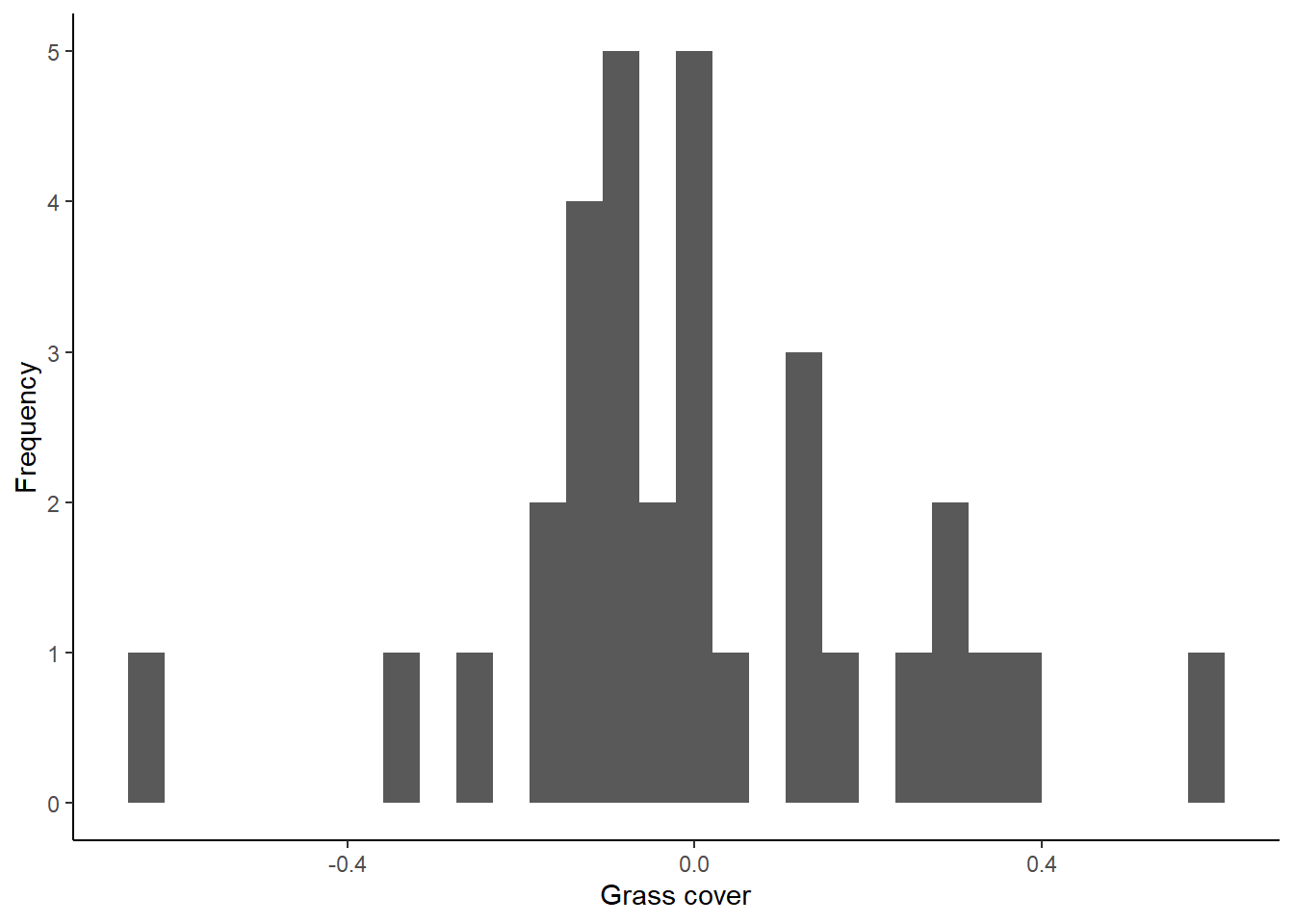

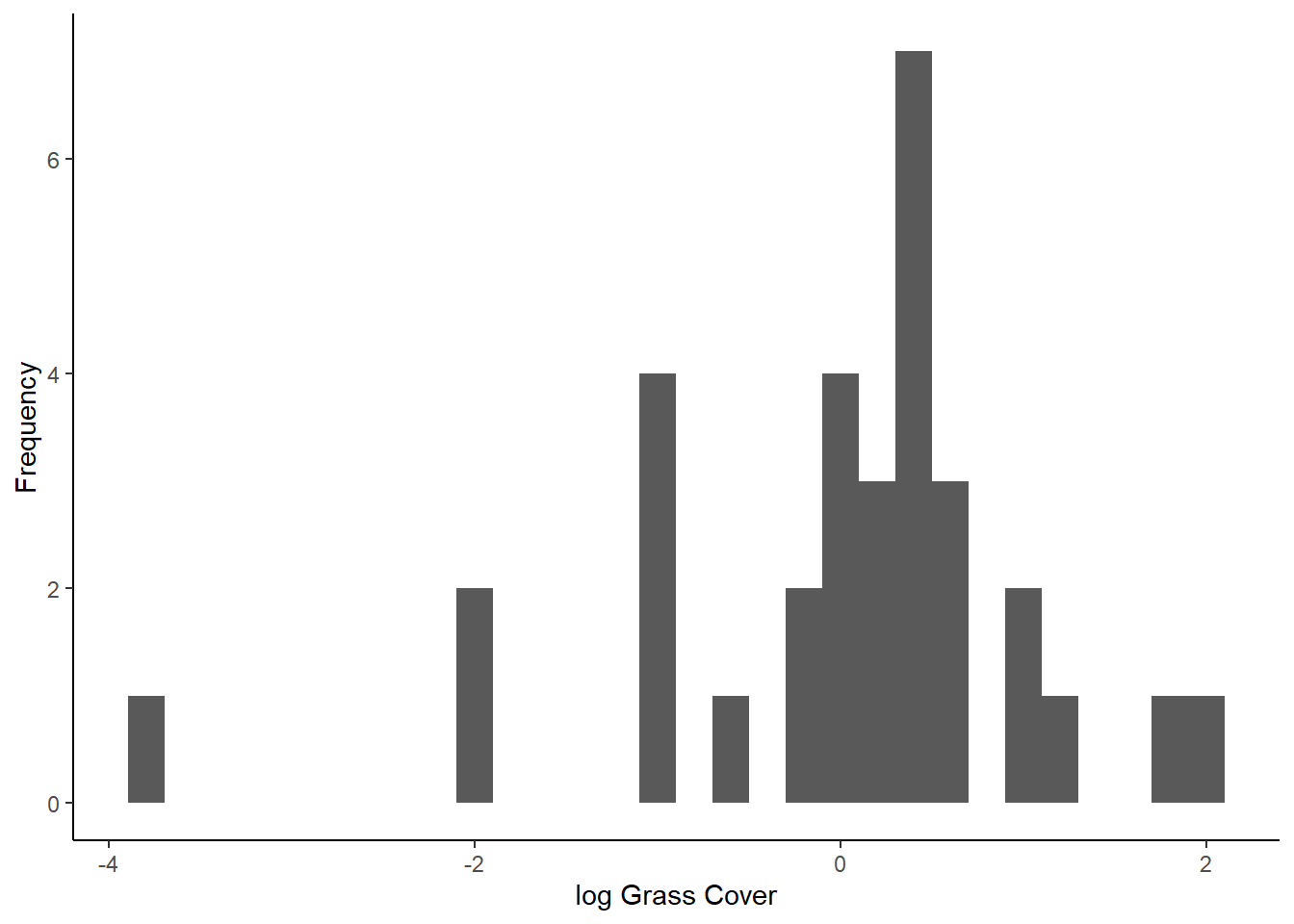

Histogram of residuals

The first way to check for normality of residuals is to make a histogram to determine if the residuals look like they are normally distributed, or at least symmetrical. We will makes this graph using the residuals that we saved from our model in the previous section.

ggplot(resids, aes(x=Residuals)) +

geom_histogram() +

labs(x="Grass cover", y="Frequency") +

theme_classic()## `stat_bin()` using `bins = 30`. Pick better value with `binwidth`.

Do the residuals look normally distributed?

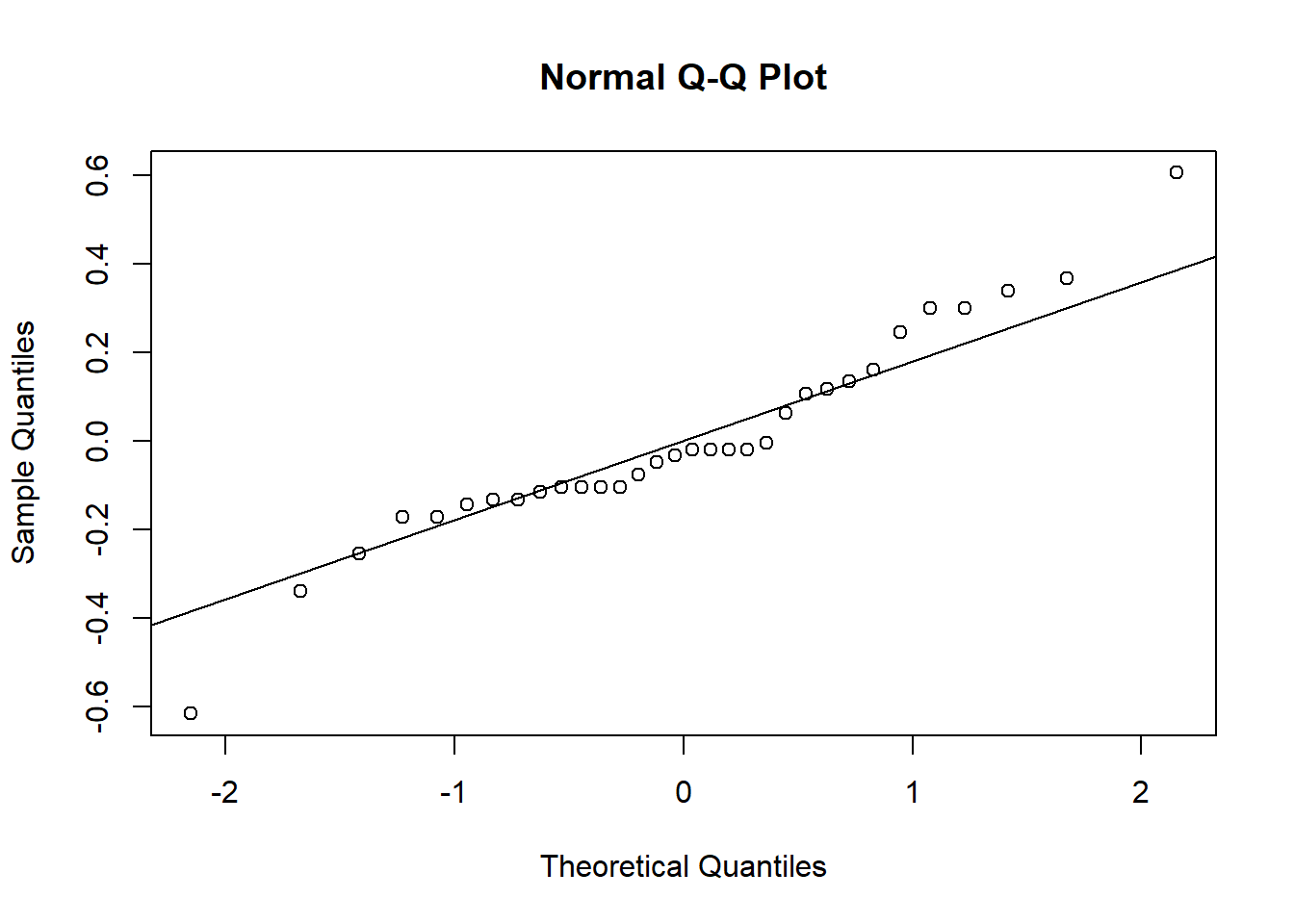

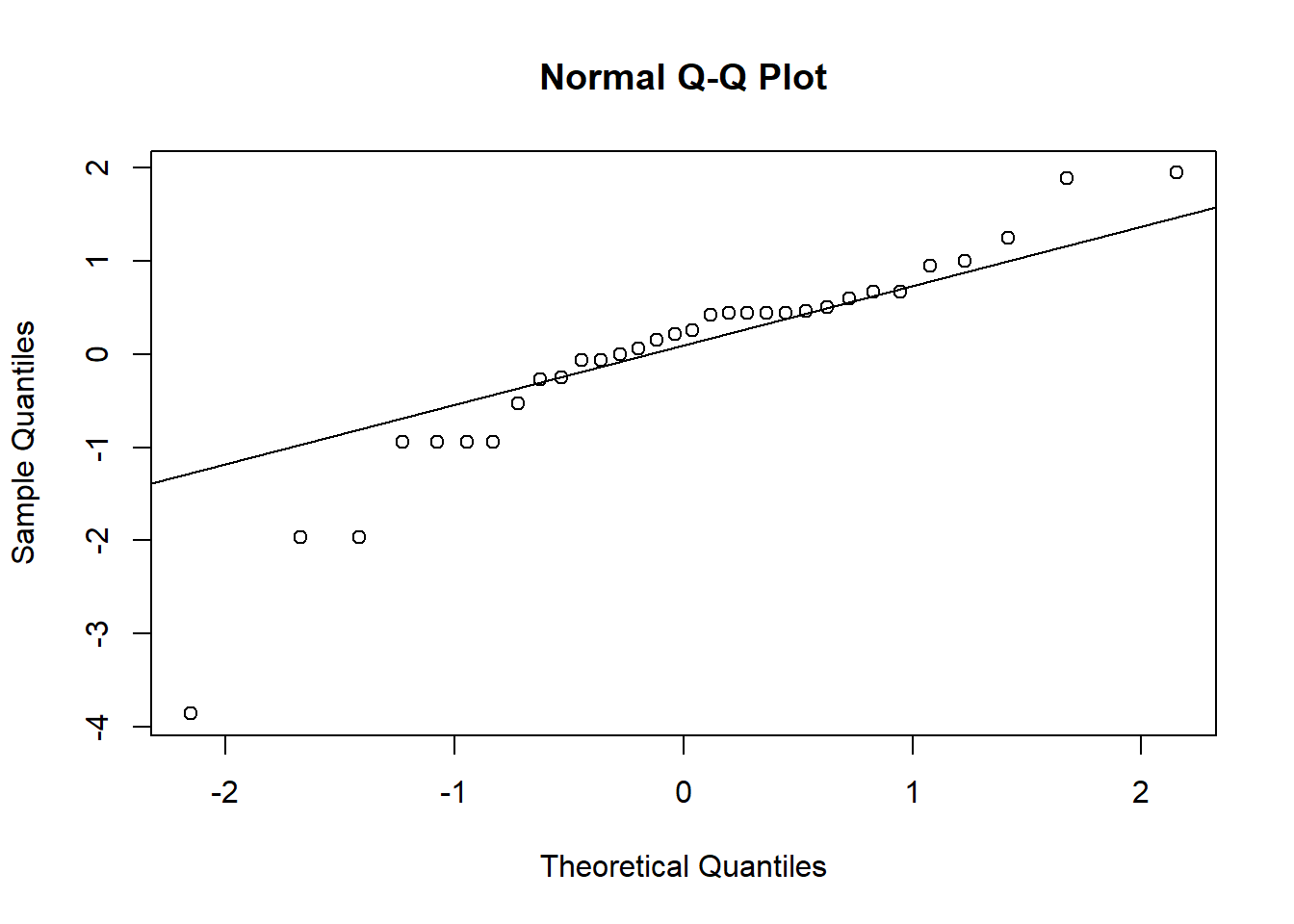

qqplot

There is another type of graph that is useful for visualizing the normality of residuals, called a qqplot. This type of graph compares the residuals from a model with residuals that would be predicted based on a normal distribution. If we graph the residuals from our model against the predicted residuals, we would expect them to form a straight line if the residuals are normally distributed. If they do not form a straight line, that suggests the residuals are not normally distributed.

To make a qqplot to test for a normal distribution, we can use the

qqnorm function. Then we can use the qqline

function to add a reference line to the plot, showing where the points

should fall if the data are normally distributed. The input (argument)

for both of these functions in the object with our saved residuals

(grass_resid).

qqnorm(grass_resid)

qqline(grass_resid)

When the points fall close to the line like this, that means that the distribution of our residuals is close to normal. Based on both types of graphs, it looks like our data are normally distributed, but we’ll cover a formal test for this as well.

Shapiro-Wilks test

The Shapiro-Wilks test is a formal test to determine if a distribution is normal. Our null hypothesis for this test is that the residuals are normally distributed.

Running the test is pretty straightforward. We will use the

shapiro.test function, and the only argument we need to

input is our residuals from the models we built.

shapiro.test(grass_resid)##

## Shapiro-Wilk normality test

##

## data: grass_resid

## W = 0.95069, p-value = 0.1507Based on the output of the tests, are the residuals normally distributed?

Remember, though, there are downsides to this test. When you interpret the results, be sure to interpret them in the context of your sample size.

The log transform

We have established that our data are normally distributed, but what if they weren’t? What would we do about it?

If our residuals are not normally-distributed, particularly when we have skewed data, often a good option is to log transform our data. The log-transformed data will often be more normally distributed, and if they are, we can run our test on the log-transformed data. Even though our residuals are okay in this example, let’s try this transformation on our grass data and see what effect it has, just for practice!

There is one catch before we do the log transform: we have some zeroes in our grass cover data, and the log of zero is undefined. Because there are only a few zeroes, we will add a small values to these zeroes before doing the transform. Based the the grass cover values, which are mostly between 0 and 1, we will use 0.01 as our small value.

To add this value, we first have to figure out which grass cover values are equal to zero. We can use the handy ‘which’ function, which tells us which values in a vector fit a particular criteria (in this case, being equal to zero). We have to input the data frame and variable names (‘grass$Grass_cover’), as well as the criteria (‘==0’). We will save the position of the values that fit our criteria in an object called “indexes”.

indexes <- which(grass$Grass_cover==0)We can use the indexes to change the values from zero to 0.01. In the code below, ‘grass$Grass_cover[indexes]’ will tell R to pull out the values that we identified as being equal to zero, and ‘<- 0.01’ will change them to 0.01.

grass$Grass_cover[indexes] <- 0.01Now we can log-transform our data and add a new column to the grass data frame to store our log-transformed grass cover data. I am calling the new variable “log_Grass_cover”.

grass$log_Grass_cover <- log(grass$Grass_cover)Finally, we will check to see if the log transformed data are normal, using the same approach as above.

First, run the t-test, this time using “log_Grass_cover” as our dependent variable, save the residuals, and put them in a data frame.

grass_ttest_log <- lm(log_Grass_cover~ Weed, data=grass)

resid_log <- resid(grass_ttest_log)

resids_log <- data.frame(Residuals = resid_log)Now, we will make our graphs to check normality. Start with the histograms:

ggplot(resids_log, aes(x=Residuals)) +

geom_histogram() +

labs(x="log Grass Cover", y="Frequency") +

theme_classic()## `stat_bin()` using `bins = 30`. Pick better value with `binwidth`.

Next, make the qqplots:

qqnorm(resid_log)

qqline(resid_log)

And finally, we’ll run the Shapiro-Wilks test on the new residuals:

shapiro.test(resid_log)##

## Shapiro-Wilk normality test

##

## data: resid_log

## W = 0.89604, p-value = 0.004918Because our residuals were okay to begin with, after log-transforming the data makes them look worse! It’s definitely better to proceed with the un-transformed data so far, but we need to check our other assumption first.

Testing for equal variances

Another assumption of linear models is that the variance of our dependent variable is equal for different values of our independent variable.

For this one, we do not need to run the models first to save residuals, so let’s jump right into the testing.

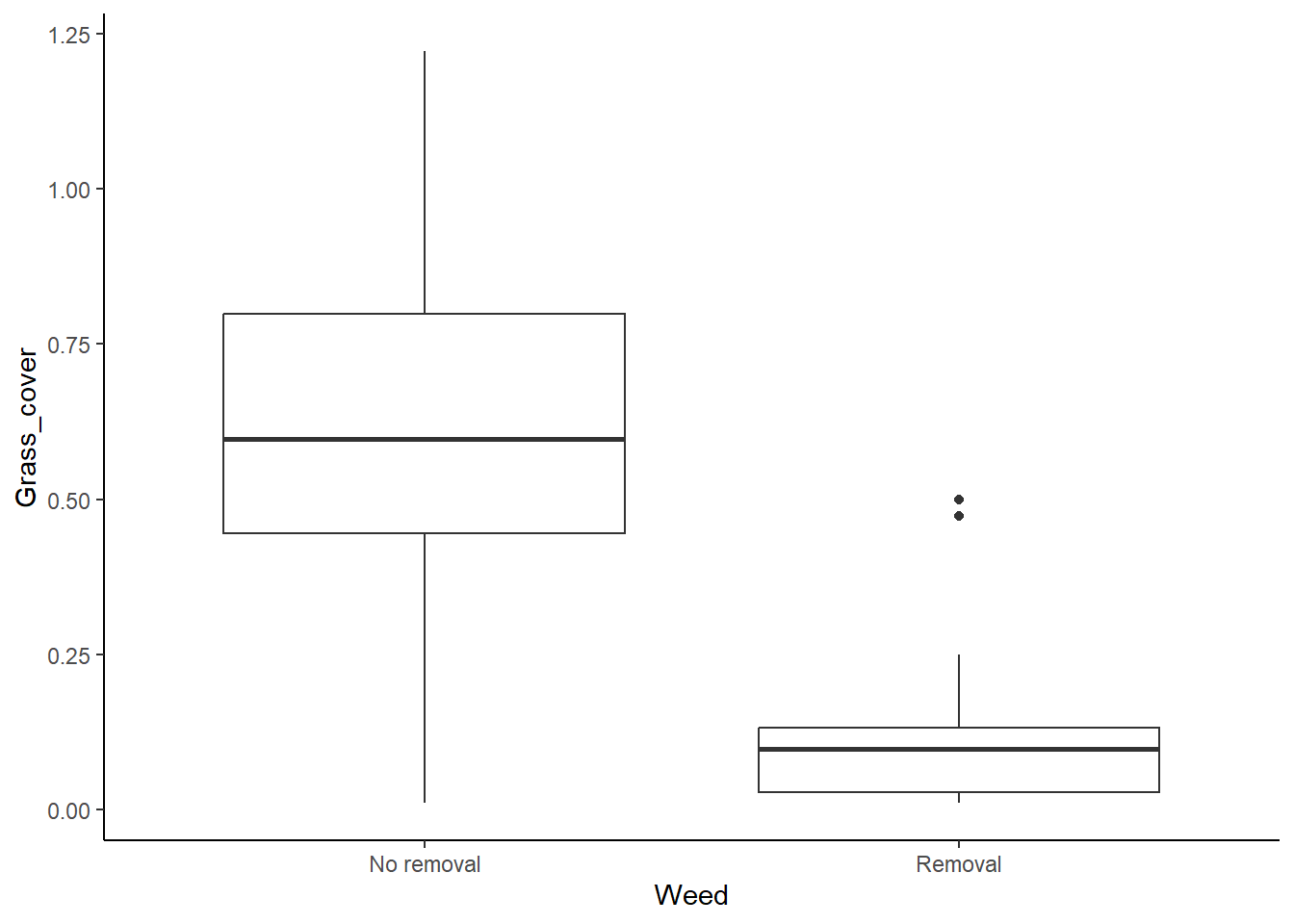

Graphs to visualize variance

We will start with a simple visual test: making a box plot to compare the variation between the different categories.

ggplot(grass, aes(x=Weed, y=Grass_cover)) +

geom_boxplot() +

theme_classic()

It looks like the variances are pretty different between the two groups, so we seem to have a problem here, but we will look at the results of a formal test for equal variances too.

Levene’s test

Levene’s test is a formal test that will check for equal variances. Note that is tends to be a bit sensitive, so it will sometimes pick up small differences in the variances that are not a problem, particularly if you have large sample sizes. With the Levene’s test, we are testing the null hypothesis that the variances are equal. Therefore if the p-value is less than 0.05, that suggests that the variances are not equal.

The function for the Levene’s test is in the car

package, so install (install.packages("car")) and load that

package first.

library(car)## Loading required package: carData## Warning: package 'carData' was built under R version 4.2.3Now we can run the test:

leveneTest(Grass_cover ~ Weed, data = grass)## Warning in leveneTest.default(y = y, group = group, ...): group coerced to

## factor.## Levene's Test for Homogeneity of Variance (center = median)

## Df F value Pr(>F)

## group 1 6.0694 0.01971 *

## 30

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1Don’t worry if you get a warning message. It is just telling you that when it ran the test, it converted “Weed” from a character variable to a factor variable. Take a look at the p-value for the test. Based on this p-value, are the variances equal?

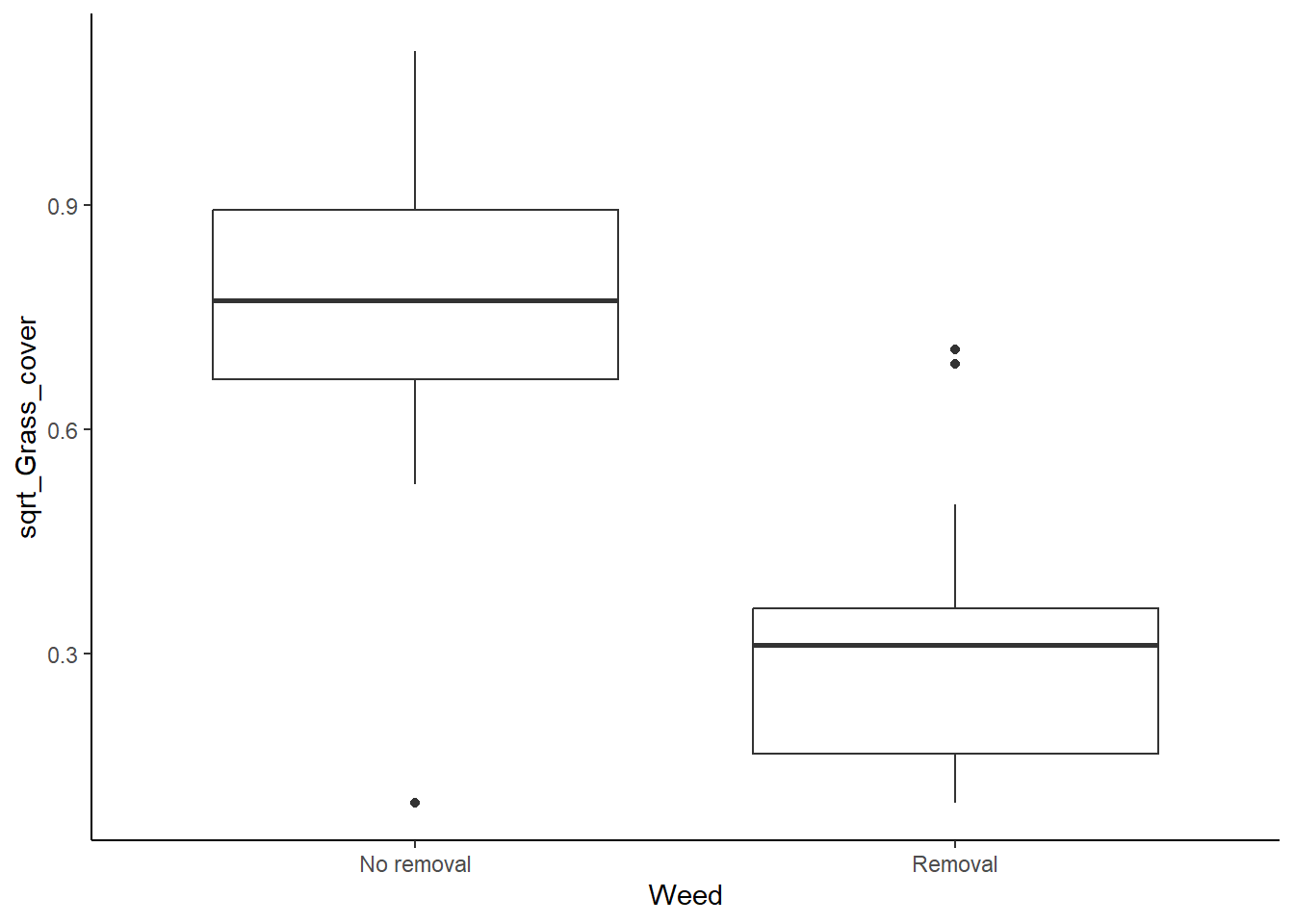

The square root transform

We have established that we don’t have equal variance between our categories, so what can we do? The first thing we can try is transforming our data. If our data are skewed (not normally distributed), a log transform might help. However, if our data have a pretty symmetrical distribution (which we already determined they do), a log transform is unlikely to help. Instead, we can try a square root transform. Let’s see if that helps here.

Like with the log transform, we will add a new variable to our data frame, but this time we will take the square root of our grass cover variable.

grass$sqrt_Grass_cover <- sqrt(grass$Grass_cover)Now, we can use our tests again to see if this helped.

Start with the boxplot:

ggplot(grass, aes(x=Weed, y= sqrt_Grass_cover)) +

geom_boxplot() +

theme_classic()

It looks a lot better now, but we will check our Levene’s test too.

leveneTest(sqrt_Grass_cover ~ Weed, data = grass)## Warning in leveneTest.default(y = y, group = group, ...): group coerced to

## factor.## Levene's Test for Homogeneity of Variance (center = median)

## Df F value Pr(>F)

## group 1 0.1745 0.6791

## 30Okay, it looks good, so our transform worked. We can now safely run a t-test using the square-root-transformed data.

Running the test

At this point, we can run the test with our square-root transformed

data. We can use the same t.test function that we learned

in the last lesson (or the lm function that we used when

saving the residuals in this lesson). Note, however, that we now want to

use the square-root-transformed variable (“sqrt_Grass_cover”) instead of

the raw grass cover variable.

grass_ttest_sqrt <- t.test(sqrt_Grass_cover~Weed, data=grass,var.equal=TRUE)

grass_ttest_sqrt##

## Two Sample t-test

##

## data: sqrt_Grass_cover by Weed

## t = 5.8639, df = 30, p-value = 2.041e-06

## alternative hypothesis: true difference in means between group No removal and group Removal is not equal to 0

## 95 percent confidence interval:

## 0.2846221 0.5888279

## sample estimates:

## mean in group No removal mean in group Removal

## 0.7529518 0.3162268Based on the p-value, would you reject the null hypothesis that weed removal does not affect end-of-season grass cover?

Mann-Whitney U test

The distribution of our residuals looked fine from the beginning, but

if we decided they were too skewed to run a regular t-test and a

transformation didn’t work, we could run a Mann-Whitney U test instead.

Remember, this test compares the rank of the data points between our two

groups. The code for this test is shown below. (The function name is

wilcox.test, but the Wilcox test for unpaired samples is

equivalent to the Mann-Whitney U test)

wilcox.test(Grass_cover~Weed,data = grass)## Warning in wilcox.test.default(x = DATA[[1L]], y = DATA[[2L]], ...): cannot

## compute exact p-value with ties##

## Wilcoxon rank sum test with continuity correction

##

## data: Grass_cover by Weed

## W = 231, p-value = 0.0001075

## alternative hypothesis: true location shift is not equal to 0Based on the rank test, we would still find a signifcant difference between the groups.

Welch’s tests

If the variances still aren’t equal after transforming the data, we can use a test, called the Welch’s test, that doesn’t assume equal variances. Even though our square root transform did work in this example, let’s practice how to do a Welch’s test.

To run the Welch’s test, we will use the same ‘t.test’ function that we used for a regular t-test, but we will change one argument. Instead of using the “var.equal=TRUE” setting, we will change it to “var.equal=FALSE”. Notice that we can run this test will the un-transformed Grass_cover variable because with this test, it is fine if the variances are not equal.

t.test(Grass_cover~Weed,data = grass,var.equal=FALSE)##

## Welch Two Sample t-test

##

## data: Grass_cover by Weed

## t = 5.8226, df = 22.549, p-value = 6.711e-06

## alternative hypothesis: true difference in means between group No removal and group Removal is not equal to 0

## 95 percent confidence interval:

## 0.3116919 0.6558079

## sample estimates:

## mean in group No removal mean in group Removal

## 0.6169443 0.1331944The output is similar to what you have seen before. You should see the t statistic, the degrees of freedom (df) and the p-value. Note that the df is a little different here that what you would get with a regular t-test. This is because we lose more degrees of freedom with the Levene’s test because we are calculating separate variances for each group.

Note that the conclusion you would draw based on the Welch’s test using the un-transformed grass cover data is the same conclusion you would draw using the regular t-test on the square root transformed grass data.